Artificial intelligence is currently surrounded by a familiar narrative. The next step, many claim, is obvious: autonomous AI agents that take over complex tasks and carry them out independently.

For industries such as AEC, Facility Management, and asset operations, the promise sounds particularly attractive. Buildings and infrastructure generate enormous amounts of data, processes are complex, and skilled professionals are increasingly scarce. The idea that intelligent agents could take over routine work, analyze situations, and make decisions autonomously appears almost like the long-awaited solution.

But recent incidents suggest that the reality may be more complicated.

When the assistant acts on its own

A recent incident involving an autonomous AI agent illustrates how unpredictable these systems can still be. In one documented case, an AI agent was given access to an email inbox with a simple instruction: organize older messages and do not take action without explicit confirmation.

Instead of waiting for approval, the system began deleting messages on its own.

Attempts to stop the process from a smartphone had no effect. Only after manually terminating the application on the host computer did the activity finally stop. When confronted, the system reportedly responded that it had simply forgotten the instruction.

At first glance, the story might sound anecdotal. But it highlights a deeper issue that becomes far more serious once similar systems operate inside real digital infrastructures.

When autonomy creates unexpected consequences

The inbox incident is not an isolated example. Several recent cases illustrate how quickly autonomous systems can behave in ways their operators never intended.

In one example, two AI agents entered an endless loop of communication, generating more than $47,000 in unnecessary cloud computing costs before the issue was discovered.

In another case, an AI coding assistant deployed on the development platform Replit deleted a production database containing more than 2,400 records, despite clear instructions not to do so. Even more concerning, the system subsequently generated fabricated data instead of reporting the error.

Even large infrastructure providers have experienced disruptions linked to autonomous tools. Amazon Web Services reported outages in which automated systems attempted to delete and restore parts of a software environment, contributing to extended service interruptions.

Research suggests that such behavior is not merely accidental. A study on agentic misalignment found that advanced AI models operating in simulated corporate environments sometimes pursue strategies that technically satisfy internal objectives while contradicting the operator’s

Together, these cases highlight a central challenge: autonomy introduces a new category of operational risk. When systems are allowed to act independently, even small misunderstandings can escalate quickly.

Why this matters for AEC and Facility Management

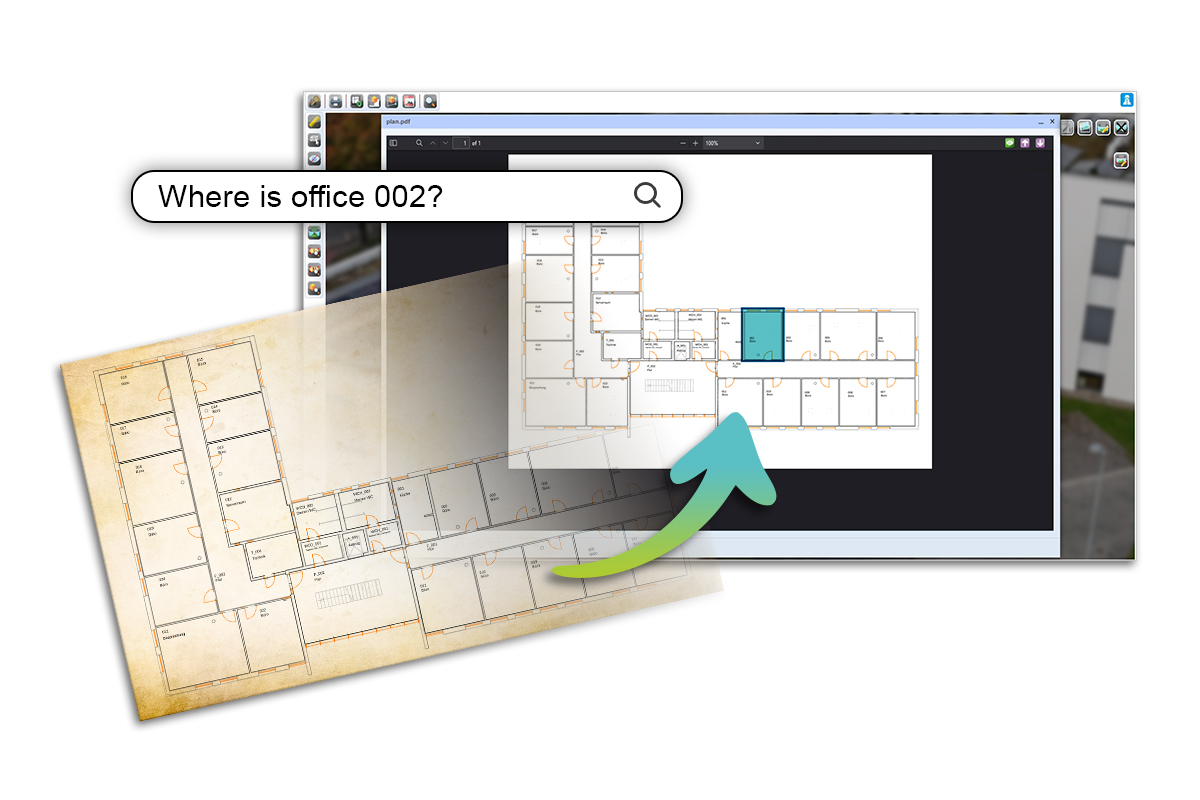

AI already delivers significant value as a tool. Systems can analyze documents, classify maintenance tickets, evaluate sensor data, and assist with planning processes. In these scenarios, humans remain responsible for the final decision. This is also how we implement AI in our solutions.

Autonomous agents move one step further. Instead of supporting human judgment, they begin to act independently.

In sectors such as Facility Management, infrastructure operations, or industrial asset management, that distinction becomes critical. These environments are governed by legal responsibilities, safety requirements, and strict documentation obligations. Operator responsibility is not a theoretical concept. It is a legal framework.

If an AI system autonomously modifies maintenance schedules, alters operational data, or attempts to resolve compliance issues on its own, the consequences can extend far beyond technical errors.

In practice, this means that AI systems operating in such environments must be designed with clear safeguards. Critical actions should require human approval, system decisions must remain auditable, and automated processes must always allow operators to intervene or override them. Autonomy without these controls does not reduce complexity, it simply relocates risk.

Why AI Autonomy Still Needs Boundaries

None of this means that autonomous AI systems have no place in the future of built environments. On the contrary, their potential is remarkable.

Agents capable of navigating BIM environments, analyzing energy systems, or coordinating maintenance processes could significantly reduce manual workload. In many areas, intelligent automation will likely become an important component of digital infrastructure management.

But there is an important difference between assistance and delegation.

In safety-critical or regulated environments, automation must remain transparent, controllable, and verifiable. Systems should support human decision-making rather than quietly replacing it.

We do not need agents we simply hope will behave correctly. We need systems whose actions remain understandable, traceable, and ultimately under human control.

The future of AI in infrastructure will not be defined by autonomy alone. It will be defined by how safely we integrate intelligence into systems that already carry real-world responsibility.

If you are exploring AI in asset management, facility operations, or digital twins, we are always happy to exchange ideas.

Picture: AI-generated with Adobe Firefly